TL;DR:

- Treating data entry outsourcing as a simple typing task leads to costly errors and inefficiencies for growing companies.

- Effective outsourcing integrates structured workflows, precise SLAs, multilingual validation, and layered quality controls to ensure clean, compliant data across markets.

Treating data entry outsourcing as a glorified typing pool is one of the most expensive mistakes a growing company can make. For mid-sized and large businesses in telecom, SaaS, and e-commerce, data entry sits at the intersection of market expansion, compliance, and operational speed. Get it right and you gain a genuine competitive edge in every market you enter. Get it wrong and you spend months cleaning up corrupted records, mismatched customer profiles, and integration failures that stall your growth. This article walks you through vendor selection, workflow modeling, quality control, and scaling strategies that actually work.

Table of Contents

- Why data entry outsourcing is vital for global businesses

- Modeling and integrating workflows: Beyond basic data entry

- Structuring RFP and onboarding: Evaluation, SLAs, and common pitfalls

- Layered quality control: Ensuring clean, usable data

- The missed opportunity most companies overlook

- Scale smarter with expert multilingual data entry support

- Frequently asked questions

Key Takeaways

| Point | Details |

|---|---|

| Strategic outsourcing matters | Treat data entry as a core process, not just a cost-cutting move, to maximize long-term business value. |

| Workflow modeling is critical | Model your full data lifecycle and integration points before selecting a vendor. |

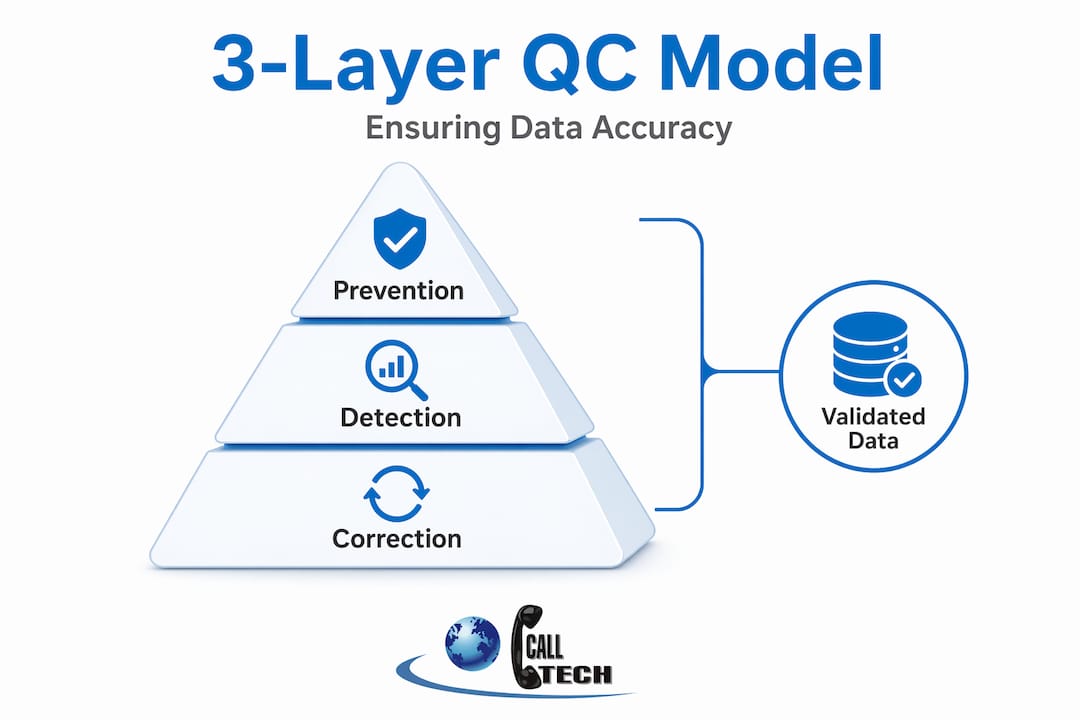

| Success depends on quality control | Apply layered QC steps—prevent, detect, correct—with pilot testing for best results. |

| Clear RFPs prevent headaches | Define scope, SLAs, and compliance needs upfront to ensure vendor alignment and minimize risk. |

| Multilingual expertise drives growth | Choose a partner with proven multilingual capacity to support your international expansion. |

Why data entry outsourcing is vital for global businesses

Global businesses cannot afford to treat data entry outsourcing as a transactional commodity. The stakes are too high, and the operational surface area is too wide.

When you expand into new European markets, every customer record, billing entry, and product catalog item needs to be processed accurately in the local language. A German telecom subscriber and a Polish SaaS user generate data that looks very different at the input level. Character sets differ. Address formats differ. Regulatory fields differ. Multilingual data entry is not a nice-to-have feature. It is a foundational requirement for any company serious about international growth.

Outsourcing this function brings five advantages that are often underestimated:

- Flexibility to scale rapidly: A vendor with pre-trained multilingual teams can add capacity for a new market launch within weeks, not quarters.

- Access to specialized expertise: Language proficiency, local compliance knowledge, and format-specific processing skills are built into the vendor's core offering rather than something your HR team has to recruit for.

- Shared accountability: When procurement is handled correctly, the vendor is contractually responsible for output quality, not just hours worked.

- Cost structure optimization: Moving from fixed headcount to variable capacity means your data processing costs flex with actual business volume.

- Reduced internal management burden: Qualified vendors bring their own QC infrastructure, training, and process governance.

That last point matters more than most buyers realize. Many companies approach outsourcing administrative services with cost reduction as the only metric. Cost savings are real, but they are a byproduct of operational efficiency, not the goal itself.

"An RFP should include measurable evaluation criteria and explicitly define SLAs/KPIs and remediation/escalation paths. Unclear scope and missing SLA/KPI definitions increase vendor bid divergence and accountability gaps." — RFP process for outsourcing

This is where the commodity mindset breaks down. When you treat data entry outsourcing as a pure cost play, you issue vague RFPs. Vague RFPs attract wildly inconsistent bids. Inconsistent bids make it impossible to evaluate vendors on equal terms, and the lowest bidder wins by default. That vendor almost always underdelivers. The scalable outsourcing methods that actually work are built on precise scope definition and mutual accountability, not just the cheapest price per record.

Modeling and integrating workflows: Beyond basic data entry

Now that we have established why outsourcing is central, let us look at what actually happens in a modern data entry workflow. Spoiler: it is nothing like someone typing from a printed sheet.

Data arrives in formats you cannot always predict. A single e-commerce operation might receive product information as PDFs from suppliers, scanned handwritten forms from regional distributors, Excel spreadsheets from logistics partners, and unstructured emails from vendor contacts. Each format requires a different ingestion approach before a single field ever reaches your database.

Then there are the integration targets. If your data needs to land cleanly in a CRM, an ERP, and a business intelligence platform simultaneously, each system has its own field requirements, data type rules, and relationship structures. This is not a typing problem. It is a data engineering problem that happens to involve human operators.

| Traditional data typing | Workflow-based ingestion |
|---|---|
| Operator reads source, types into form | Structured intake captures format metadata first |
| No validation at entry point | Validation rules fire before data is committed |
| Errors discovered downstream | Errors caught and flagged at point of entry |
| Single format assumed | Multi-format parsing handled by workflow logic |
| No audit trail by default | Every action logged for compliance review |
| Rework done manually | Correction loops built into the process |
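To make the right-hand column more concrete, here is a minimal Python sketch of a structured intake step. It is an illustration under stated assumptions, not a description of any vendor's system: the `ingest` function, the `PARSERS` registry, and the file names are all hypothetical, and only CSV and JSON are handled because PDFs and scans would need extraction or OCR tooling that is out of scope here.

```python
import csv
import io
import json
from datetime import datetime, timezone

# Hypothetical parsers keyed by declared format. PDF or scanned input would
# need extraction/OCR tooling and is deliberately left out of this sketch.
def parse_csv(payload: str) -> list[dict]:
    return list(csv.DictReader(io.StringIO(payload)))

def parse_json(payload: str) -> list[dict]:
    data = json.loads(payload)
    return data if isinstance(data, list) else [data]

PARSERS = {"csv": parse_csv, "json": parse_json}

def ingest(source_name: str, fmt: str, payload: str, audit_log: list[dict]) -> list[dict]:
    """Capture format metadata, route to the matching parser, log the action."""
    entry = {
        "source": source_name,
        "format": fmt,
        "received_at": datetime.now(timezone.utc).isoformat(),
    }
    parser = PARSERS.get(fmt)
    if parser is None:
        # Unknown formats are routed to a human instead of being guessed at.
        entry["status"] = "needs_manual_review"
        audit_log.append(entry)
        return []
    records = parser(payload)
    entry["status"] = "parsed"
    entry["record_count"] = len(records)
    audit_log.append(entry)
    return records

audit_log: list[dict] = []
rows = ingest("supplier_a.csv", "csv", "sku,name\nA1,Lamp\nB2,Desk\n", audit_log)
unknown = ingest("scan_017.tiff", "tiff", "", audit_log)
```

The point of the design is that format metadata and an audit entry exist before any field is parsed, so an unsupported input becomes a logged review task instead of a silent failure.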

The validation layer is where most vendors fall short. Duplicate detection, format checks, and anomaly spotting are not optional extras. They are the mechanism that prevents bad data from entering your systems in the first place. An e-commerce data entry case with multiple file formats and integration targets makes clear that modeling ingestion-to-validation workflows is far more important than optimizing typing labor.

Validation typically covers three sub-functions:

- Duplicate detection: Catching the same record entered more than once, especially critical when multiple data sources feed the same system.

- Format validation: Ensuring phone numbers, postal codes, dates, and currency fields conform to target system requirements.

- Anomaly spotting: Flagging records that fall outside expected value ranges before they corrupt downstream analytics.

When CRM integration for multilingual support is part of your requirements, these validation rules need to be configured per language and per market. A French address format is not the same as a Swedish one, and your vendor needs to handle both.
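The three validation sub-functions, configured per market, could look roughly like the Python sketch below. Treat it as a simplified illustration: the `validate_batch` function, the field names, the email-based duplicate key, and the amount range are assumptions made for the example, and the postal code patterns are deliberately basic and would need to be confirmed against each country's addressing rules.

```python
import re

# Illustrative postal code patterns per market (assumed for this example).
POSTAL_PATTERNS = {
    "DE": re.compile(r"\d{5}"),
    "FR": re.compile(r"\d{5}"),
    "PL": re.compile(r"\d{2}-\d{3}"),
    "SE": re.compile(r"\d{3} ?\d{2}"),
}

def validate_batch(records, amount_range=(0, 10_000)):
    """Return (record index, issue) pairs for one batch of records."""
    issues = []
    seen_keys = set()
    low, high = amount_range
    for i, record in enumerate(records):
        # Duplicate detection on a normalized key (case-insensitive email).
        key = record["email"].strip().lower()
        if key in seen_keys:
            issues.append((i, "duplicate"))
        seen_keys.add(key)

        # Format validation using the rule for the record's market.
        pattern = POSTAL_PATTERNS.get(record["country"])
        if pattern is None:
            issues.append((i, "no_rule_for_market"))
        elif not pattern.fullmatch(record["postal_code"]):
            issues.append((i, "bad_postal_code"))

        # Anomaly spotting: flag values outside the expected range.
        if not low <= record["amount"] <= high:
            issues.append((i, "amount_out_of_range"))
    return issues

records = [
    {"email": "anna@example.com", "country": "DE", "postal_code": "10115", "amount": 49.0},
    {"email": "Anna@Example.com ", "country": "DE", "postal_code": "10115", "amount": 49.0},
    {"email": "jan@example.com", "country": "PL", "postal_code": "00950", "amount": 25.0},
    {"email": "eva@example.com", "country": "SE", "postal_code": "114 55", "amount": 250000.0},
]
issues = validate_batch(records)
# issues == [(1, "duplicate"), (2, "bad_postal_code"), (3, "amount_out_of_range")]
```

Note that a market without a configured rule is flagged rather than passed through, which is the behavior you would want to see when a vendor adds a new country.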

Pro Tip: Before finalizing a vendor agreement, ask them to show you their ingestion-to-validation workflow for a specific input format relevant to your data. A vendor who can walk you through this in detail is a vendor who actually has the infrastructure. One who deflects to "we handle all formats" probably does not.

Structuring RFP and onboarding: Evaluation, SLAs, and common pitfalls

Workflow complexity means that how you evaluate and onboard vendors is mission-critical. Let us break down the RFP process and its most common traps.

A well-structured RFP for data entry outsourcing is not a price comparison document. It is a capability assessment. These are the components that must be present:

- Scope definition: Specify every input format, every target system, every language, and every data type. Leave nothing to assumption.

- Measurable evaluation criteria: Define how you will score vendor responses. Accuracy rate benchmarks, turnaround time expectations, and language coverage requirements should all be scored, not subjectively judged.

- SLA specifications: Define timeliness thresholds, minimum accuracy rates (typically 99% or higher for financial or customer-facing data), and acceptable error tolerances by data category.

- KPI definitions: These are the ongoing metrics you will track post-onboarding. Examples include records processed per hour, error rate per 1,000 records, and time-to-escalation for flagged anomalies.

- Remediation and escalation paths: What happens when the vendor misses an SLA? How quickly must errors be corrected? Who is the escalation contact? These should be written into the contract, not discussed informally.

- Compliance requirements: Data privacy regulations, particularly GDPR for European markets, must be explicitly addressed. Ask for evidence of compliance training and data handling protocols.

The most common RFP pitfalls in outsourcing procurement are omitting SLA/KPI definitions and focusing exclusively on the lowest price. Both errors produce the same outcome: a vendor who wins the contract and then underperforms because there is no measurable standard to hold them to.
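One way to keep price from deciding by default is to score every response against weighted criteria. The Python sketch below is a hypothetical example: the criteria, the weights, the 1 to 5 scale, and the vendor names are all assumptions, and your own weights should reflect your actual priorities.

```python
# Hypothetical criteria weights (must sum to 1.0). Price is one input among
# several instead of the deciding factor.
WEIGHTS = {
    "accuracy": 0.35,
    "turnaround": 0.20,
    "language_coverage": 0.20,
    "compliance": 0.15,
    "price": 0.10,
}

def score_vendor(scores: dict) -> float:
    """Weighted score for one vendor; each criterion is rated 1 (poor) to 5 (strong)."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return round(sum(WEIGHTS[c] * scores[c] for c in WEIGHTS), 2)

vendors = {
    "Vendor A": {"accuracy": 5, "turnaround": 4, "language_coverage": 4, "compliance": 5, "price": 2},
    "Vendor B": {"accuracy": 2, "turnaround": 3, "language_coverage": 2, "compliance": 2, "price": 5},
}

ranking = sorted(
    ((name, score_vendor(scores)) for name, scores in vendors.items()),
    key=lambda item: item[1],
    reverse=True,
)
# Vendor A scores 4.3 and Vendor B scores 2.5, even though B is cheaper.
```

A response that leaves a criterion unanswered raises an error here instead of scoring zero, which mirrors the idea that an incomplete RFP response should be sent back, not quietly ranked.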

Onboarding is equally critical and often underplanned. A structured onboarding period should include:

- A parallel processing phase where the vendor works alongside your current process so outputs can be compared directly.

- Documented standard operating procedures (SOPs) shared and signed off by both parties.

- A calibration session where sample records are processed and reviewed jointly before live data is touched.

- Clear go/no-go criteria that determine when the vendor is ready to operate independently.
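For the parallel processing phase, the comparison and the go/no-go decision can be as simple as the Python sketch below. It assumes both sides key their output by a shared record ID, and the `compare_outputs` and `go_no_go` helpers and the 99% agreement threshold are hypothetical choices for illustration.

```python
def compare_outputs(baseline: dict, vendor: dict, fields: list):
    """Compare vendor records to the in-house baseline, field by field."""
    mismatches = []
    for record_id, expected in baseline.items():
        actual = vendor.get(record_id)
        for field in fields:
            # A record the vendor did not deliver counts as a miss on every field.
            if actual is None or expected.get(field) != actual.get(field):
                mismatches.append((record_id, field))
    total = len(baseline) * len(fields)
    agreement = 1 - len(mismatches) / total if total else 0.0
    return agreement, mismatches

def go_no_go(agreement: float, threshold: float = 0.99) -> str:
    """Apply an agreed threshold (assumed here) to the measured agreement."""
    return "go" if agreement >= threshold else "no-go"

baseline = {
    "r1": {"name": "Anna Müller", "city": "Berlin"},
    "r2": {"name": "Jan Kowalski", "city": "Łódź"},
}
vendor_output = {
    "r1": {"name": "Anna Muller", "city": "Berlin"},  # umlaut lost during entry
    "r2": {"name": "Jan Kowalski", "city": "Łódź"},
}

agreement, mismatches = compare_outputs(baseline, vendor_output, ["name", "city"])
decision = go_no_go(agreement)
# agreement is 0.75 (one of four fields differs), so the decision is "no-go".
```

The mismatch list is as useful as the rate: a dropped diacritic points to a character-set problem in the vendor's tooling, which is exactly the kind of issue a calibration session should surface.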

A partner with experience in both customer experience outsourcing and back-office outsourcing arrives with onboarding frameworks already in place instead of reinventing the process for every new client.

Pro Tip: Require vendors to submit a completed SLA/KPI template as part of their RFP response. If they push back on this, it tells you everything you need to know about how they handle accountability.
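Once the template is filled in, the numbers in it can be checked mechanically. The Python sketch below shows one possible shape for that check. The 99% accuracy floor comes from the SLA guidance above, while the 24-hour turnaround target, the `SlaTargets` and `kpi_report` names, and the sample volumes are assumptions for illustration.

```python
from dataclasses import dataclass

@dataclass
class SlaTargets:
    min_accuracy: float = 0.99           # accuracy floor from the SLA
    max_turnaround_hours: float = 24.0   # hypothetical timeliness threshold

def kpi_report(processed: int, with_errors: int, turnaround_hours: float,
               targets: SlaTargets) -> dict:
    """Compute basic KPIs for a reporting period and list any SLA breaches."""
    if processed <= 0:
        raise ValueError("processed must be positive")
    accuracy = 1 - with_errors / processed
    errors_per_1000 = 1000 * with_errors / processed
    breaches = []
    if accuracy < targets.min_accuracy:
        breaches.append("accuracy")
    if turnaround_hours > targets.max_turnaround_hours:
        breaches.append("turnaround")
    return {
        "accuracy": accuracy,
        "errors_per_1000": errors_per_1000,
        "breaches": breaches,
    }

report = kpi_report(processed=12_500, with_errors=150, turnaround_hours=20.0,
                    targets=SlaTargets())
# 150 errors in 12,500 records is 12 errors per 1,000 and 98.8% accuracy,
# which falls below the 99% floor and is reported as an accuracy breach.
```

A non-empty `breaches` list is what should trigger the remediation and escalation path written into the contract.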

Layered quality control: Ensuring clean, usable data

Once a vendor is selected, ongoing success depends on rigorous, layered quality control. This is not a set-and-forget process. It requires active management structured around three distinct control layers.

| QC layer | Mechanism | Timing | Goal |
|---|---|---|---|
| Layer 1: Prevention | Validation rules, standardized templates, intake checklists | Before data entry begins | Block errors at source |
| Layer 2: Detection | Spot-checking, reconciliation, automated validation sweeps | During and immediately after entry | Catch errors before they propagate |
| Layer 3: Correction | Error logging, root cause analysis, process adjustment | After detection | Fix errors and prevent recurrence |

Each layer serves a distinct function and depends on the layers before it. Prevention reduces the volume of errors that reach detection. Detection reduces the volume that requires correction. Without all three, you end up managing symptoms instead of the actual problem.

The three-layer QC approach relies on vendors having the infrastructure to implement prevention through validation rules and templates, detection through spot-checking and automated reconciliation, and correction through structured error logging and root cause processes.
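As a rough illustration of how the three layers divide the work, here is a minimal Python sketch. The function names, the required fields, and the error causes are hypothetical, and a real setup would use far richer rules at every layer.

```python
import random
from collections import Counter

def prevent(record: dict, required_fields: list) -> bool:
    """Layer 1: reject records with missing required fields before entry."""
    return all(record.get(f) not in (None, "") for f in required_fields)

def detect(entered: dict, source_of_truth: dict, sample_size: int, seed: int = 0) -> list:
    """Layer 2: spot-check a random sample of entered values against the source."""
    rng = random.Random(seed)
    sample_ids = rng.sample(sorted(entered), min(sample_size, len(entered)))
    return [rid for rid in sample_ids if entered[rid] != source_of_truth[rid]]

def correct(error_log: list) -> list:
    """Layer 3: tally logged errors by cause so the process itself can be fixed."""
    return Counter(e["cause"] for e in error_log).most_common()

incoming = [
    {"id": "r1", "name": "Anna", "email": "anna@example.com"},
    {"id": "r2", "name": "", "email": "x@example.com"},  # blocked at layer 1
]
accepted = [r["id"] for r in incoming if prevent(r, ["name", "email"])]

entered = {"r1": "Anna Muller", "r3": "Jan Kowalski", "r4": "Eva Lind"}
source = {"r1": "Anna Müller", "r3": "Jan Kowalski", "r4": "Eva Lind"}
flagged = detect(entered, source, sample_size=3)

error_log = [
    {"record": "r1", "cause": "diacritics_dropped"},
    {"record": "r7", "cause": "diacritics_dropped"},
    {"record": "r9", "cause": "wrong_date_format"},
]
root_causes = correct(error_log)
```

The correction layer returns causes ranked by frequency, not a list of fixed records, because the goal at that stage is to change the process so the same error stops recurring.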

"Pilots that mirror real data and edge cases are essential. A controlled test that uses sanitized or simplified data will not reveal the vendor's true capacity to handle the complexity of live operations." — How to outsource data entry quality control

This is why pilot projects are non-negotiable in the evaluation process. A pilot designed to mirror your actual data complexity, including edge cases, unusual formats, and multilingual fields, tells you far more than any vendor presentation ever will.

The pilot should run for a defined period, typically two to four weeks, and produce a report that covers:

- Error rate by data category and input format.

- Processing speed relative to agreed benchmarks.

- Escalation frequency and resolution time.

- Compliance with SOPs and audit logging requirements.
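The first item in that report, error rate by data category and input format, is a straightforward aggregation. The Python sketch below assumes each reviewed pilot record carries a `category`, an `input_format`, and a `has_error` flag; those field names and the sample data are hypothetical.

```python
from collections import defaultdict

def pilot_error_rates(results: list) -> dict:
    """Aggregate error rate per (data category, input format) pair."""
    totals = defaultdict(lambda: [0, 0])  # [records reviewed, records with errors]
    for r in results:
        key = (r["category"], r["input_format"])
        totals[key][0] += 1
        totals[key][1] += int(r["has_error"])
    return {key: errors / count for key, (count, errors) in totals.items()}

pilot_results = [
    {"category": "billing", "input_format": "pdf", "has_error": False},
    {"category": "billing", "input_format": "pdf", "has_error": True},
    {"category": "billing", "input_format": "xlsx", "has_error": False},
    {"category": "catalog", "input_format": "scan", "has_error": True},
    {"category": "catalog", "input_format": "scan", "has_error": True},
    {"category": "catalog", "input_format": "scan", "has_error": False},
    {"category": "catalog", "input_format": "scan", "has_error": False},
]

rates = pilot_error_rates(pilot_results)
# In this sample, billing PDFs and catalog scans both show a 50% error rate,
# while billing spreadsheets show none.
```

Breaking the rate out this way shows whether a weak overall number comes from one difficult input format or from the vendor's process as a whole, which changes what you negotiate in the SLA.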

Linking your pilot results directly to your SLA framework means you go into the live engagement with a calibrated baseline, not a hope. Working with outsourcing providers for global support who treat pilots as standard practice rather than an optional extra signals a mature operations mindset.

SOPs also play a critical role in auditability. Every data handling procedure should be documented so that any operator can follow it and any auditor can review it. This is especially important in regulated industries like telecom and financial SaaS where data handling records may be subject to external review.

The missed opportunity most companies overlook

Here is the frank assessment that most outsourcing guides skip: the companies that see the greatest return from data entry outsourcing are not the ones who negotiated the lowest per-record price. They are the ones who engineered the engagement as a process and integration advantage.

When you commoditize data entry, you select for cost and ignore everything else. You get a vendor who types fast and checks nothing. The data lands in your systems looking clean but harboring silent errors that compound over months. By the time you notice the problem, your customer database has duplicates, your analytics are skewed, and your market responsiveness has suffered in ways that are genuinely hard to quantify.

The companies that treat vendors as workflow partners see something different. They co-design the validation rules. They share their CRM and ERP field requirements in detail. They run calibration sessions that identify edge cases before they become live problems. The result is not just lower error rates. It is faster onboarding for new markets, cleaner data for business intelligence, and a vendor who can flag anomalies proactively rather than waiting to be caught.

Data onboarding and compliance are competitive levers. In markets where GDPR applies, the difference between a vendor who has documented data handling procedures and one who does not is the difference between a clean audit and a regulatory incident. That is not a checkbox. That is risk management.

Boosting efficiency through outsourcing does not happen by accident. It happens when decision-makers stop treating back-office functions as cost centers and start treating them as infrastructure. Data entry, done well, is the foundation that everything from customer analytics to billing accuracy to market segmentation is built on. The companies that understand this are the ones that scale without the constant rework cycles that slow everyone else down.

Scale smarter with expert multilingual data entry support

As you consider your organization's next steps, here is how expert partners can help you operationalize everything covered above.

At CallTech Outsourcing, we have spent nearly 20 years building multilingual back-office and data processing capabilities across more than 15 European languages. Our teams work with telecom, SaaS, and e-commerce companies that need multilingual outsourcing services that go beyond simple task execution. We bring workflow modeling, CRM integration experience, and structured QC frameworks to every engagement. If you are scaling into new European markets and need a partner who treats data accuracy as seriously as you do, our multilingual e-commerce support and back-office solutions teams are ready to talk. Reach out for a tailored consultation and let us map your data entry requirements against a scalable, compliant solution.

Frequently asked questions

What should an RFP for data entry outsourcing always include?

A robust RFP must clearly outline scope, measurable evaluation criteria, SLAs/KPIs, escalation protocols, and compliance expectations. Leaving out SLA/KPI definitions is the single most common procurement mistake that leads to vendor underperformance.

How long does it take to process a typical e-commerce data entry record?

Processing time typically runs 8 to 12 minutes per record, depending on input format complexity and the number of validation steps required at entry.

What is a 3-layer quality control approach?

It divides data quality controls into prevention, detection, and correction, each with specific tools and processes so errors are blocked, caught, and eliminated systematically rather than discovered late in the pipeline.

Why is multilingual capability important for data entry outsourcing?

It ensures data is processed accurately for each target market, which is critical for global SaaS, telecom, and e-commerce businesses where address formats, character sets, and regulatory fields vary significantly by country.

What's a common mistake when selecting a data entry vendor?

Focusing only on price and neglecting detailed SLA definitions or QC testing leads to data errors and misaligned expectations. Omitting SLA/KPI definitions and evaluating vendors on price alone are the two pitfalls that most reliably result in costly vendor failures.